OpenSSH is a suite of tools that lets you connect securely to a remote server using the SSH protocol. It encrypts all traffic between client and server to prevent eavesdropping, connection hijacking, and other attacks. This post shows how to boost your Windows Server security with OpenSSH.

Why use OpenSSH for Windows server security?

Some of the reasons to use OpenSSH are:

1) Free and open-source: You can review, modify, and distribute the source code under a BSD-style license.

2) Extensive support: It runs on multiple operating systems, including Microsoft Windows, macOS, Linux, and BSD.

3) Development and Improvement: It is continuously developed and improved by the OpenBSD team and the user community, who follow a policy of producing clean and audited code.

OpenSSH is based on the original free version of SSH by Tatu Ylönen, which was the first to replace the insecure .rhosts authentication with public key authentication. It offers features such as tunneling, multiple authentication methods, flexible configuration options, X11 forwarding, SCP, and SFTP.

Installing OpenSSH on a Windows Server

Installing OpenSSH on Windows Server is straightforward. Follow the steps below.

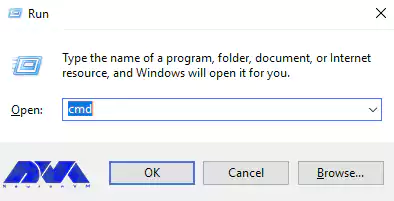

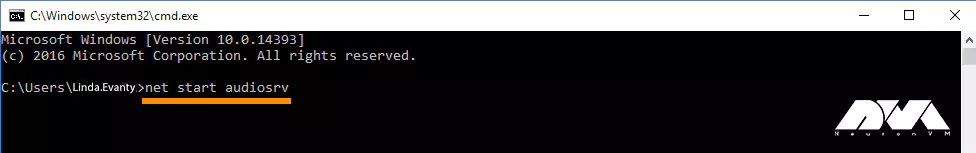

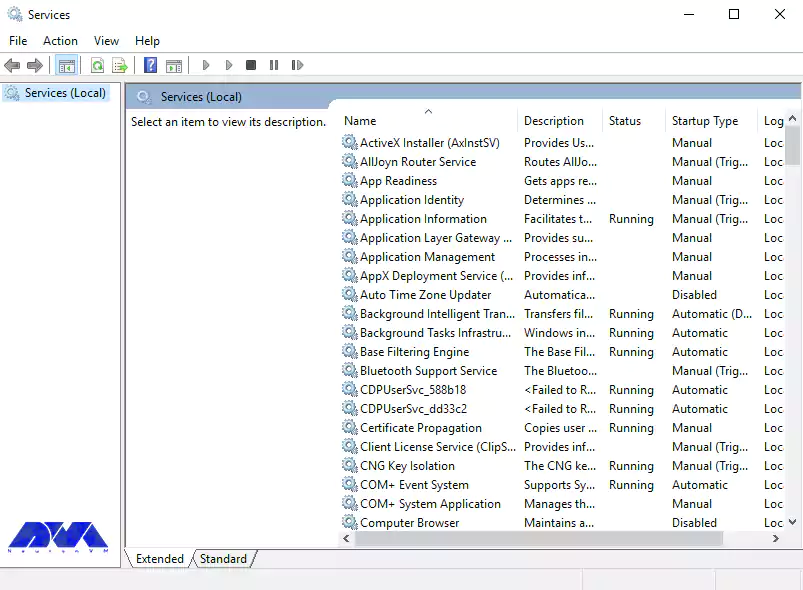

1) In the Start menu search box, type PowerShell and run it as Administrator, because installing Windows capabilities requires elevated privileges.

2) Install the OpenSSH server by running the following command in PowerShell. Note that the capability name includes a version suffix:

Add-WindowsCapability -Online -Name OpenSSH.Server~~~~0.0.1.0

To install the OpenSSH client, run the following command:

Add-WindowsCapability -Online -Name OpenSSH.Client~~~~0.0.1.0

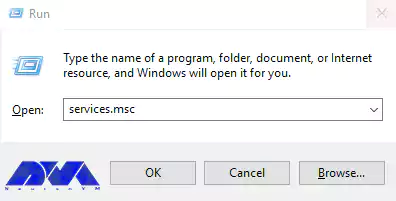

Configuring OpenSSH for Windows Server

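This section walks through the OpenSSH configuration steps. The server reads its settings from the sshd_config file, which on Windows is created under C:\ProgramData\ssh the first time the sshd service starts (for example with Start-Service sshd). Open the file in Notepad by running the following command in PowerShell, make your changes, and then restart the service (Restart-Service sshd) so that they take effect:

Start-Process notepad C:\ProgramData\ssh\sshd_config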

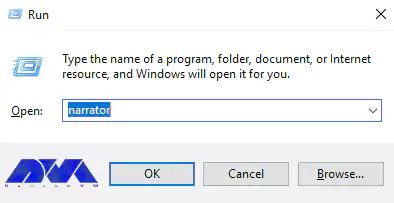

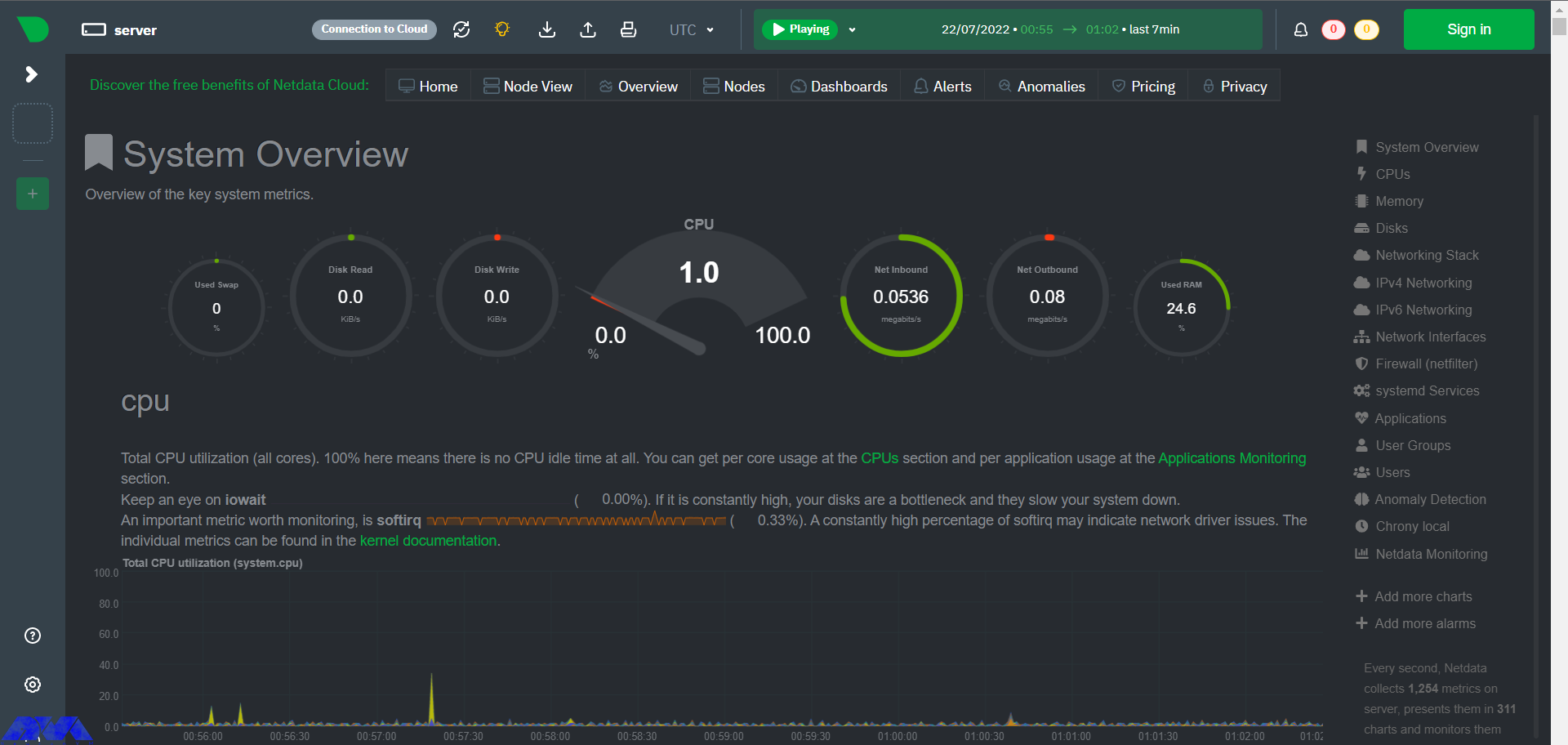

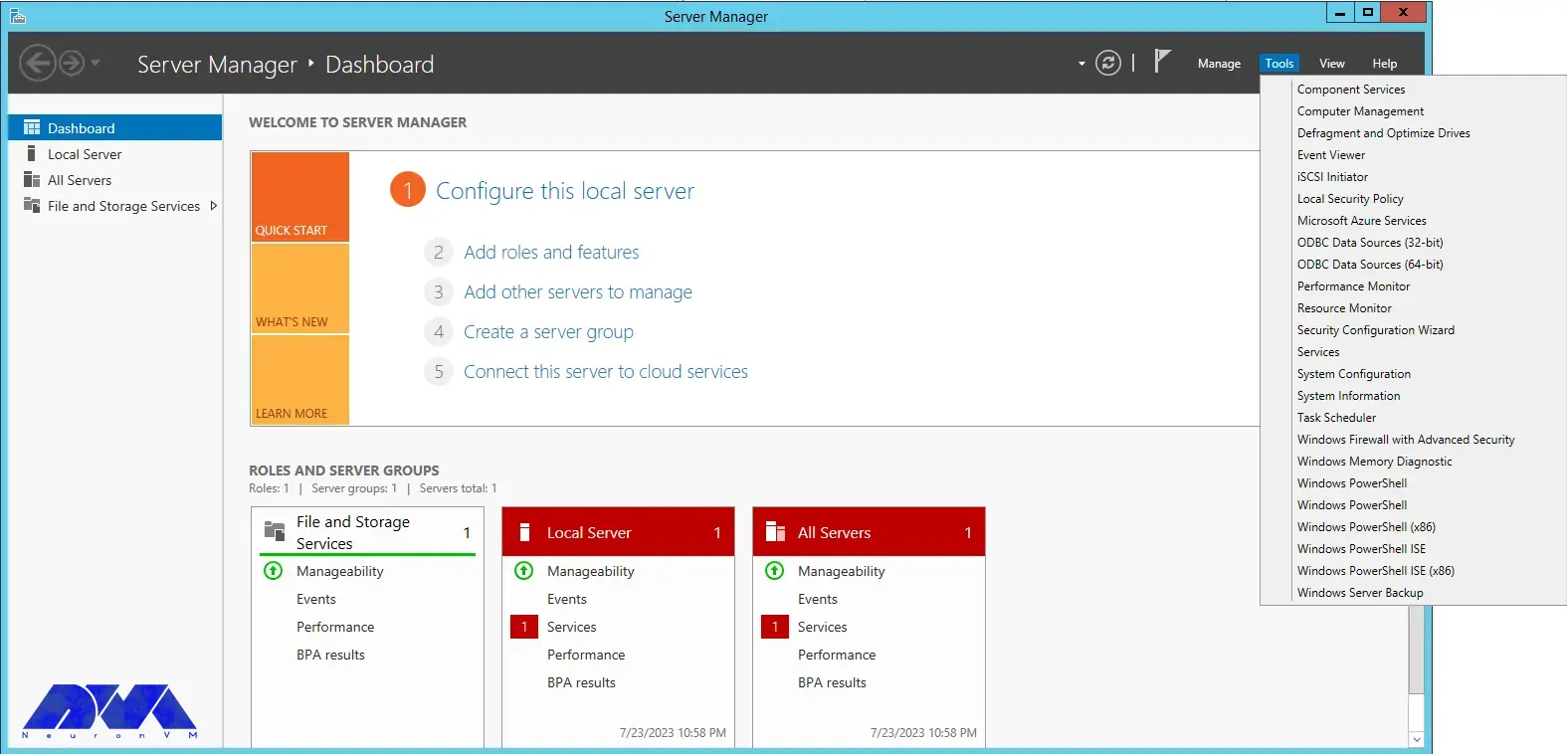

To configure the firewall, open Server Manager from the Start menu.

Then select “Windows Firewall with Advanced Security” from the Tools menu:

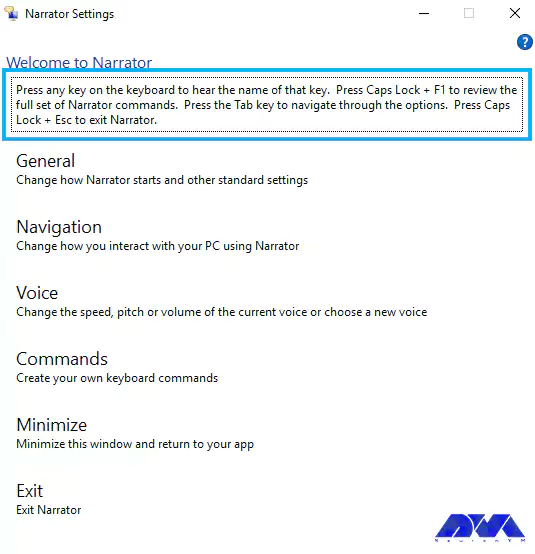

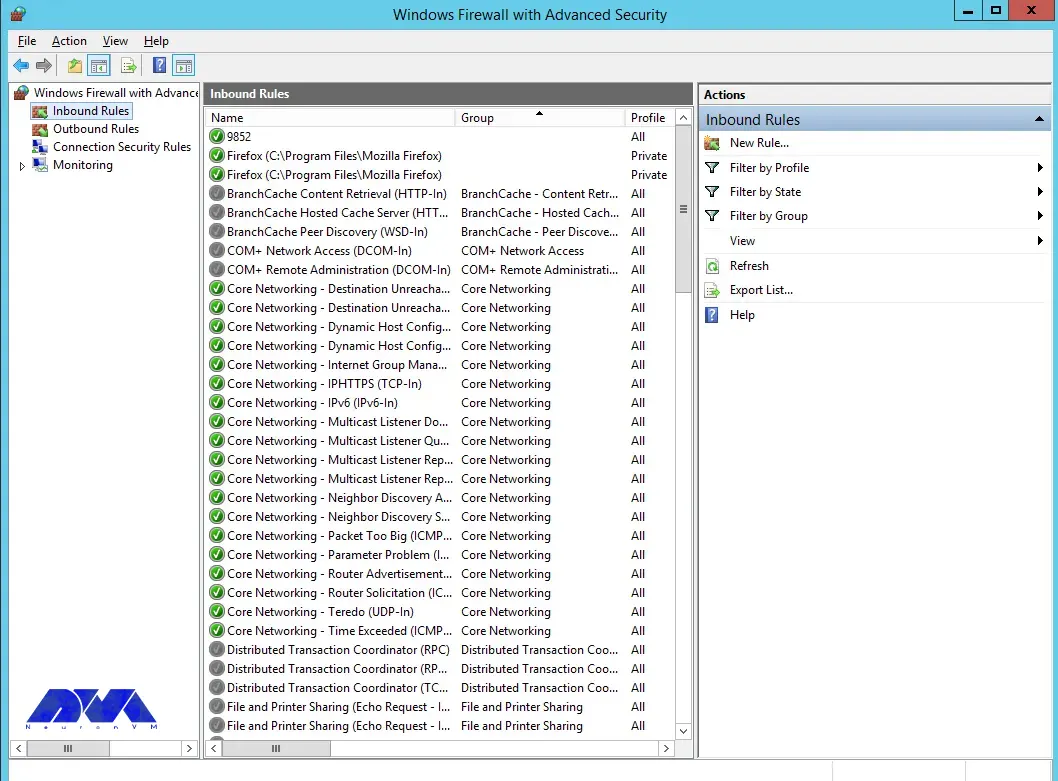

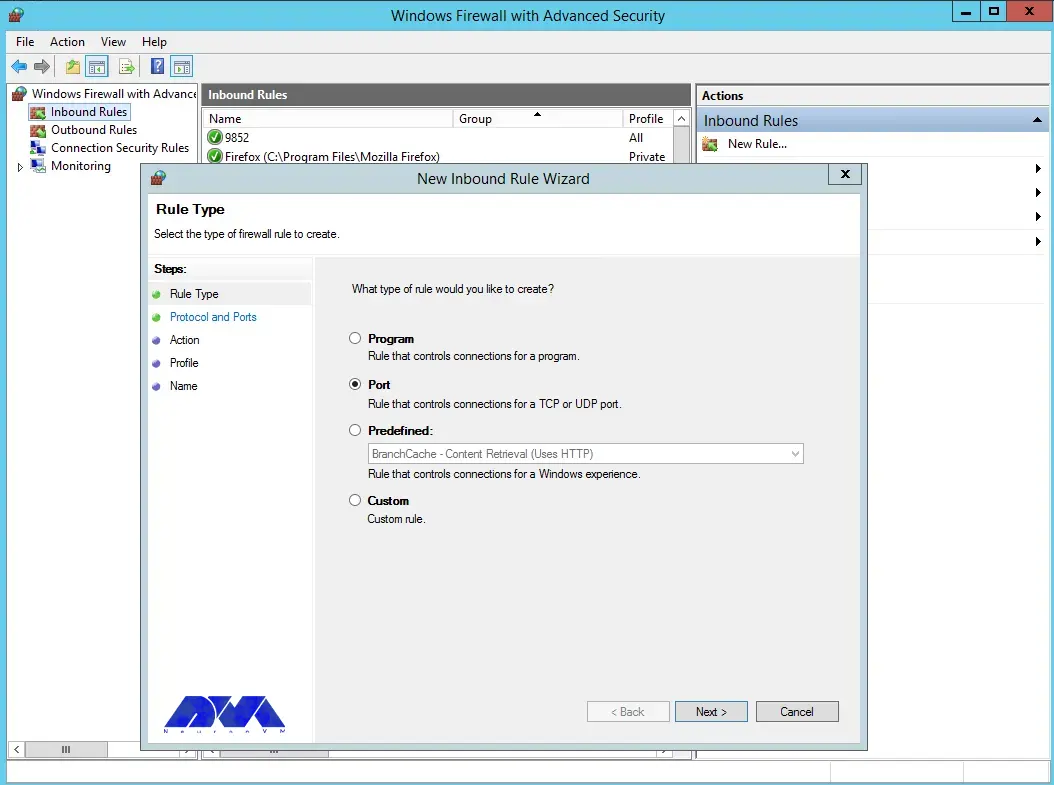

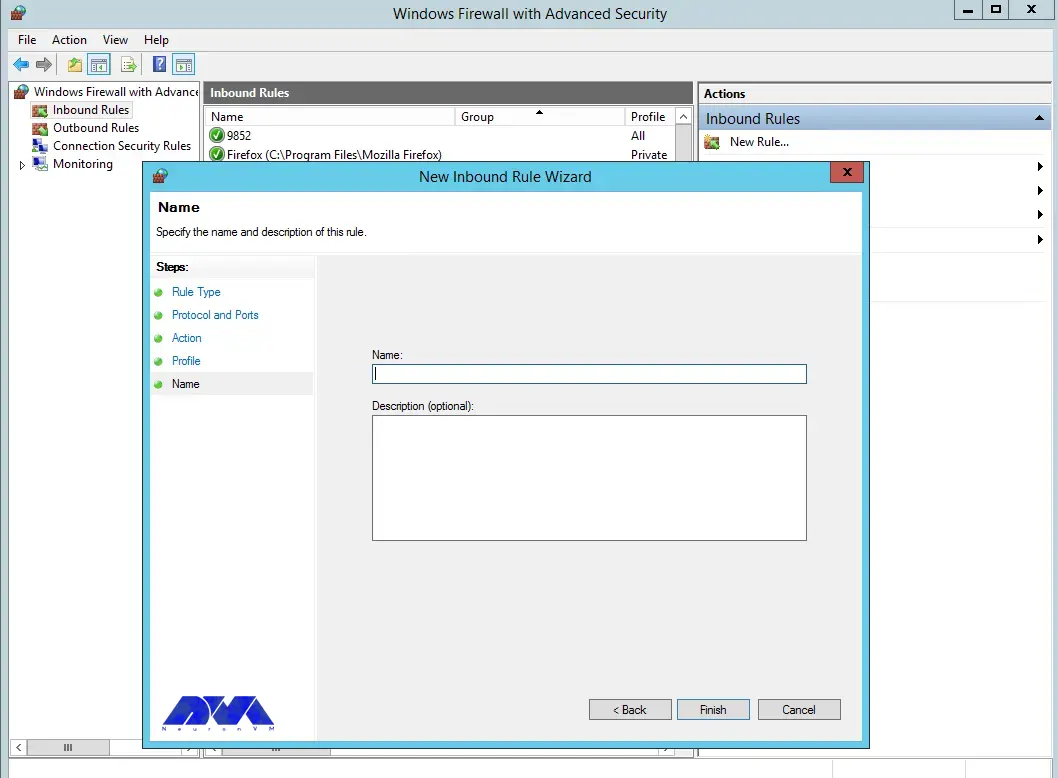

In the Inbound Rules section, select the New Rule option:

Select Port as the rule type and then click Next:

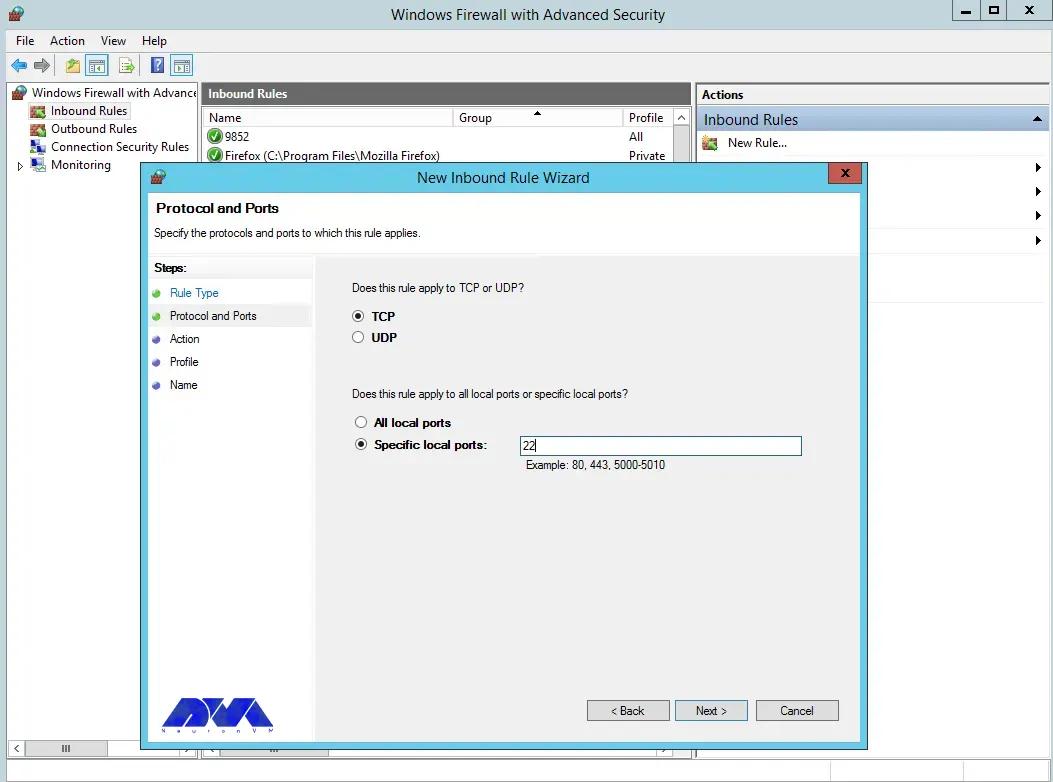

Select TCP as shown in the image below, then type port 22 and click Next:

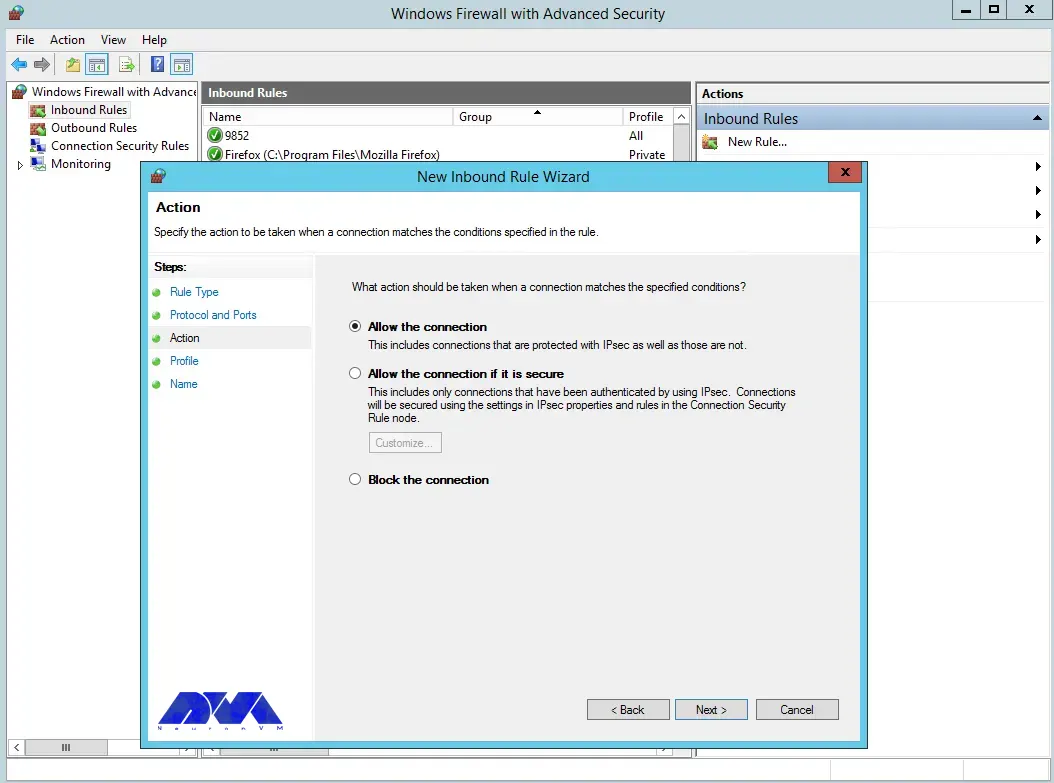

Next, you need to allow the connection:

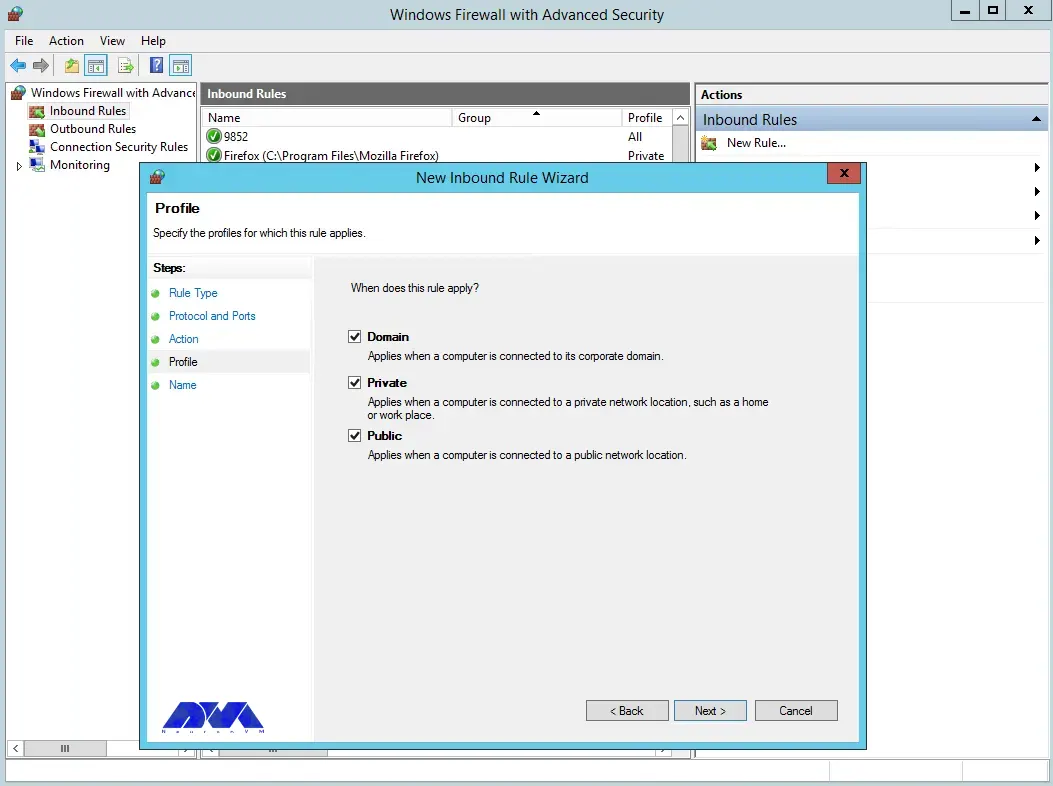

You can then choose which network profiles the rule applies to and give it a custom name so that it is easy to find in the list of firewall rules.

In the final step, click Finish to complete the firewall configuration.

Using OpenSSH for secure remote access

With OpenSSH, you can securely connect to remote machines using the SSH protocol. It lets you log in to a shell, copy files, use key-based authentication, mount remote file systems, and more. Note that the OpenSSH server must be installed on the machine you connect to, and an SSH client on the machine you connect from.

Advanced OpenSSH security features

As mentioned in the previous sections, OpenSSH is a tool that allows you to securely connect to a remote server using the SSH protocol. OpenSSH encrypts all traffic between client and server to prevent possible attacks.

To take advantage of the advanced security features of OpenSSH, it is necessary to perform the following steps:

1) Install OpenSSH on the client and server machines using Windows Settings or a package manager.

2) To configure OpenSSH, open the sshd_config file (C:\ProgramData\ssh\sshd_config on Windows, /etc/ssh/sshd_config on Linux) and set the following directives, each on its own line:

PasswordAuthentication no

PermitRootLogin no

3) Generate a public and private key pair on the client machine by running the ssh-keygen command.

4) Copy the public key to the server machine, for example by running the ssh-copy-id command where it is available. Once the public key has been added to the ~/.ssh/authorized_keys file on the server, you can log in with your key instead of a password. Confirm that key-based login works before relying on PasswordAuthentication no, otherwise you can lock yourself out.
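Where ssh-copy-id is not available on the client, the public key has to be added to the authorized_keys file by other means. The following is a minimal Python sketch of that step, not how ssh-copy-id itself is implemented. The function name install_public_key and the placeholder key are hypothetical, and the demonstration writes to a temporary directory. On Windows Server, check the AuthorizedKeysFile setting in your sshd_config first, since members of the Administrators group may be pointed at a different file than ~/.ssh/authorized_keys.

```python
import os
import tempfile
from pathlib import Path


def install_public_key(public_key_line, authorized_keys_path):
    """Append a public key to authorized_keys unless it is already present.

    Returns True if the key was added, False if it was already there.
    """
    key = public_key_line.strip()
    path = Path(authorized_keys_path)
    path.parent.mkdir(parents=True, exist_ok=True)

    current = path.read_text() if path.exists() else ""
    if key in (line.strip() for line in current.splitlines()):
        return False

    with path.open("a") as handle:
        # Make sure the new key starts on its own line
        if current and not current.endswith("\n"):
            handle.write("\n")
        handle.write(key + "\n")

    if os.name == "posix":
        # On Unix-like systems the file should be private to its owner
        os.chmod(path, 0o600)
    return True


# Demonstration against a temporary directory so no real account is touched.
# The key below is a made-up placeholder, not a usable key.
with tempfile.TemporaryDirectory() as home:
    target = Path(home) / ".ssh" / "authorized_keys"
    placeholder = "ssh-ed25519 AAAAC3NzaC1lZDI1NTE5AAAAIPLACEHOLDER user@client"
    print(install_public_key(placeholder, target))  # first call adds the key
    print(install_public_key(placeholder, target))  # second call finds it already present
```

Because the function checks for an existing entry before writing, running it twice with the same key leaves the file unchanged the second time.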

Troubleshooting OpenSSH installation and configuration issues

In this section, we are going to review and troubleshoot OpenSSH installation and configuration issues.

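1) Remote host identification and hostname errors:

The first errors we are going to troubleshoot concern identifying the remote host. You may receive the following warning when the host key presented by the server no longer matches the one stored in your known_hosts file, for example after the server has been reinstalled:

REMOTE HOST IDENTIFICATION HAS CHANGED

If you have verified that the change is legitimate, remove the stale entry by running ssh-keygen -R followed by the hostname, then reconnect.

Or, when SSH cannot resolve the hostname to a network address, the following error may occur:

Error output

ssh: Could not resolve hostname example.co: Name or service not known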

Solution:

- Check that the hostname is spelled correctly.

- Check whether the hostname resolves, for example with the ping command.

- If you have a DNS problem, connect using the server's IP address instead of its hostname.
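The "REMOTE HOST IDENTIFICATION HAS CHANGED" warning appears when the key a server presents no longer matches the entry stored for that host in the client's known_hosts file. To make that mechanism concrete, here is a simplified Python sketch that drops the stored entries for one host from known_hosts text. The function name remove_host_entries is hypothetical, and the sketch only handles plain (unhashed) host names without wildcards. In practice, ssh-keygen -R is the tool for this job.

```python
def remove_host_entries(known_hosts_text, host):
    """Return known_hosts text without the plain entries that name `host`."""
    kept = []
    for line in known_hosts_text.splitlines():
        stripped = line.strip()
        if not stripped or stripped.startswith("#"):
            kept.append(line)  # keep blank lines and comments untouched
            continue
        fields = stripped.split()
        # Optional markers such as @cert-authority come before the host field
        if fields[0].startswith("@") and len(fields) > 1:
            host_field = fields[1]
        else:
            host_field = fields[0]
        # The host field is a comma-separated list of names and addresses
        if host in host_field.split(","):
            continue
        kept.append(line)
    return "\n".join(kept) + ("\n" if kept else "")


# Example with made-up entries; the key material is a placeholder
sample = (
    "example.com,203.0.113.5 ssh-ed25519 AAAAPLACEHOLDER1\n"
    "other.example.org ssh-rsa AAAAPLACEHOLDER2\n"
)
print(remove_host_entries(sample, "example.com"), end="")
```

Only remove an entry after confirming that the server's key really did change for a legitimate reason, because the warning can also indicate that someone is intercepting the connection.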

2) Connection Timeout:

A timeout means that the client tried to connect to the server but received no response within the allowed time. For example, connecting with ssh to the address 111.111.111.111 may produce this error:

Error output

ssh: connect to host 111.111.111.111 port 22: Connection timed out

Solution:

- Check that the IP address is correct.

- Check that the network allows connections to the SSH port.

- Check that the firewall rules allow the SSH port; a default configuration may block it.

3) Connection failure

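An important point is that a connection failure is different from a timeout. A refused connection means that your request reaches the host, but the host rejects it, typically because no service is listening on the SSH port.

Error output

ssh: connect to host 111.111.111.111 port 22: Connection refused

Solution:

- Check that the IP address is correct.

- Check that the network allows connections to the SSH port.

- Check that the SSH service (sshd) is running on the server and listening on the port you are connecting to.

- Check that the firewall rules allow the SSH port; a default configuration may block it.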
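The three failure modes above (unresolvable hostname, timeout, and refused connection) can be told apart with a plain TCP connection attempt, before SSH itself is involved. The following Python sketch shows one way to do that. The function name probe_ssh_port and its return labels are hypothetical, and the five-second default timeout is an arbitrary assumption.

```python
import socket


def probe_ssh_port(host, port=22, timeout=5.0):
    """Try a TCP connection and classify the outcome.

    Returns one of: "open", "unresolved", "refused", "timeout", "error".
    """
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return "open"        # something accepted the connection
    except socket.gaierror:
        return "unresolved"      # the hostname could not be resolved
    except ConnectionRefusedError:
        return "refused"         # host reachable, nothing listening on the port
    except (socket.timeout, TimeoutError):
        return "timeout"         # no answer, often a firewall dropping packets
    except OSError:
        return "error"           # any other network failure
```

Calling probe_ssh_port("111.111.111.111") would return "timeout" or "refused" in the situations described above. An "open" result only shows that the port accepts TCP connections, not that the service behind it is sshd.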

Best practices for using OpenSSH on Windows Server

In this section, we cover best practices for using OpenSSH on Windows Server.

1) Limit ssh access of users:

By default, every system user can log in via SSH with a password or public key, and once logged in has access to system tools, including compilers and scripting languages that can be used to open network ports. You can limit SSH access to only the users Jonnson and Terri by adding the following directive to the sshd_config file:

AllowUsers Jonnson Terri

To allow access to all users except a limited number of them, add the following directive instead:

DenyUsers root Linda Thomas Michael
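When both directives are present, sshd checks DenyUsers before AllowUsers, so a user matched by a deny pattern is rejected even if an allow pattern also matches. The Python sketch below models that order in a simplified form. The function name is_login_allowed is hypothetical, and the sketch ignores the USER@HOST form, negated patterns, and the group directives (AllowGroups, DenyGroups).

```python
from fnmatch import fnmatchcase


def is_login_allowed(user, allow_users=(), deny_users=()):
    """Simplified model of how AllowUsers and DenyUsers combine.

    Patterns may use the wildcards * and ?, as in sshd_config.
    """
    # DenyUsers is evaluated first and always wins
    if any(fnmatchcase(user, pattern) for pattern in deny_users):
        return False
    # If AllowUsers is set, only users matching one of its patterns may log in
    if allow_users:
        return any(fnmatchcase(user, pattern) for pattern in allow_users)
    # With no AllowUsers directive, everyone not denied is allowed
    return True


print(is_login_allowed("Jonnson", allow_users=["Jonnson", "Terri"]))
print(is_login_allowed("Linda", deny_users=["root", "Linda", "Thomas", "Michael"]))
```

The first call models the AllowUsers example and the second the DenyUsers example from this section.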

2) Disable empty passwords:

Accounts with empty passwords should never be allowed to log in remotely. Disallow them explicitly by adding the following directive to the sshd_config file:

PermitEmptyPasswords no

3) Disable root user login:

This step applies to Linux servers, because Windows has no root account. Before disabling root login, make sure that a normal user can gain root privileges. For example, allow the user Jonnson to become root using the sudo command:

On a Debian/Ubuntu:

sudo adduser Jonnson sudo

id Jonnson

On a CentOS/RHEL/Fedora:

sudo usermod -aG wheel Jonnson

id Jonnson

Now test sudo access and check the status of the SSH service by running the following commands:

sudo -i

sudo /etc/init.d/sshd status

sudo systemctl status sshd

Finally, disable root login by adding the following lines to sshd_config:

PermitRootLogin no

ChallengeResponseAuthentication no

PasswordAuthentication no

UsePAM no

4) Disable password-based login:

To disable password-based login, add the following directives to the sshd_config file, each on its own line:

AuthenticationMethods publickey

PubkeyAuthentication yes
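After editing sshd_config, it can be useful to check which values are actually in effect. For each keyword, sshd uses the first value it finds, and a keyword that is absent falls back to its default. The Python sketch below audits a configuration text against a few of the settings recommended in this post. The function name audit_sshd_config is hypothetical, the defaults are assumed from the upstream sshd_config manual page, and anything inside Match blocks is ignored.

```python
# Values recommended in this post for the global section of sshd_config
RECOMMENDED = {
    "passwordauthentication": "no",
    "pubkeyauthentication": "yes",
    "permitemptypasswords": "no",
}

# Assumed upstream OpenSSH defaults, used when a keyword does not appear
DEFAULTS = {
    "passwordauthentication": "yes",
    "pubkeyauthentication": "yes",
    "permitemptypasswords": "no",
}


def audit_sshd_config(config_text):
    """Return (keyword, effective_value, recommended_value) for each mismatch."""
    seen = {}
    for raw_line in config_text.splitlines():
        line = raw_line.strip()
        if not line or line.startswith("#"):
            continue
        parts = line.split(None, 1)
        keyword = parts[0].lower()  # keywords are case-insensitive
        if keyword == "match":
            break  # conditional blocks are out of scope for this sketch
        value = parts[1].strip().lower() if len(parts) > 1 else ""
        seen.setdefault(keyword, value)  # the first value wins

    findings = []
    for keyword, wanted in RECOMMENDED.items():
        actual = seen.get(keyword, DEFAULTS[keyword])
        if actual != wanted:
            findings.append((keyword, actual, wanted))
    return findings


sample = "PubkeyAuthentication yes\nPasswordAuthentication yes\n"
for keyword, actual, wanted in audit_sshd_config(sample):
    print(f"{keyword}: effective {actual}, recommended {wanted}")
```

An empty result means the checked settings already match the recommendations. It does not replace testing a real login after restarting the service.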

5) Use SSH public key-based login:

For public key-based authentication, first generate a key pair with ssh-keygen. The general form of the command is shown first, followed by two examples:

ssh-keygen -t key_type -b bits -C "comment"

ssh-keygen -t ed25519 -C "Login to production cluster at xyz corp"

ssh-keygen -t rsa -b 4096 -f ~/.ssh/id_rsa_aws_$(date +%Y-%m-%d) -C "AWS key for abc corp clients"

Finally, install the public key using the following commands:

ssh-copy-id -i /path/to/public-key-file user@host

ssh-copy-id user@remote-server-ip-or-dns-name

ssh-copy-id Jonnson@rhel7-aws-server

Check that SSH key-based login works for you by running the following command:

ssh Jonnson@rhel7-aws-server

Alternatives to OpenSSH for Windows Server security

In this section, we intend to tell you the best alternatives to OpenSSH for Windows Server security in 2023. These alternatives are:

1) SecureCRT: SecureCRT is software for terminal access to network devices and servers. This software can be used for Windows, Mac, and Linux operating systems. In addition, it provides a suitable environment for professional work with terminals along with increasing productivity, advanced management of sessions, and saving time by not doing repetitive tasks!

2) MobaXterm: MobaXterm is a toolbox for remote computing. On the Windows operating system, it offers many functions designed for programmers, webmasters, IT managers, and almost all users who need to handle their remote work more easily.

3) PuTTY: PuTTY is a terminal emulator and file transfer program developed as free software for Windows, and it has also been ported to other operating systems. It supports several protocols, including Serial, SSH, Telnet, Raw, and rlogin.

4) Remmina: Remmina is a useful tool for connecting to remote machines over the network. It supports several protocols, each through its own plug-in. The protocols that Remmina supports are as follows:

- RDP (Remote Desktop Protocol)

- VNC (Virtual Network Computing)

- Telnet

- SSH

- NX

- XDMCP

5) mRemoteNG: mRemoteNG is a multi-tab remote connection manager. This tool is also a central tool for managing communications to remote systems. mRemoteNG has many features including the ability to manage multiple types of connections. In addition to RDP, this tool also supports other protocols including VNC, ICA, SSH, Telnet, RAW, Rlogin, and HTTP/S.

The tab feature is useful when you have multiple sessions open and need to move between them. Other features of this software include simple organization of connections, saved credentials for automatic login, import from Active Directory, a full-screen mode, and the ability to group connections into folders.

Conclusion and next steps

OpenSSH is an implementation of the SSH protocol. It is recommended for remote login, backups, remote file transfer via scp or sftp, and much more. SSH protects the confidentiality and integrity of data exchanged between two networks and systems. Its main advantage, however, is server authentication through public key cryptography.