Windows Server 2019 is one of the most capable operating systems available for servers. It builds on the previous version, Windows Server 2016, and adds new features on top of it. But what are these new features? The changes and optimizations fall mainly into three areas: security, the application platform, and hybrid cloud operation. In this article, we explore the new features of Windows Server 2019.

Key features and improvements in Windows Server 2019

Two changes stand out in the look and the internals of Windows Server 2019: Desktop Experience and System Insights. Desktop Experience is the graphical interface of Windows Server. It is offered to improve usability, and users can choose at installation time whether to install it or to run the server without it (Server Core).

The second change, System Insights, is a new feature built into Windows Server 2019. It analyzes data collected on your server, such as performance counters and events, and reports its findings so that you can optimize the server. Its forecasts can point out weak points, such as a volume that is running out of space, before they cause problems.
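The kind of capacity forecast described above can be illustrated with a small Python sketch. It is not the model System Insights uses. It only fits a straight line to daily disk-usage samples and estimates when the trend would reach the volume's capacity. The function names and the sample figures are hypothetical.

```python
from statistics import mean


def fit_linear_trend(samples):
    """Fit a least-squares line through (day_index, used_gb) points."""
    if len(samples) < 2:
        raise ValueError("need at least two samples to fit a trend")
    xs = range(len(samples))
    x_bar, y_bar = mean(xs), mean(samples)
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, samples))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    return slope, intercept


def days_until_full(samples, capacity_gb):
    """Days after the last sample until the trend line reaches capacity.

    Returns None when usage is flat or shrinking.
    """
    slope, intercept = fit_linear_trend(samples)
    if slope <= 0:
        return None
    day_full = (capacity_gb - intercept) / slope
    return max(0.0, day_full - (len(samples) - 1))


# Hypothetical daily usage of a 500 GB volume, in GB.
daily_used_gb = [400, 410, 420, 430, 440]
print(days_until_full(daily_used_gb, capacity_gb=500))
```

A straight line is the simplest possible model. Real usage is often seasonal or bursty, so a forecast like this is best treated as an early warning rather than a precise date.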

We recommend choosing a plan that fits your needs from the Windows VPS server plans provided on our website. After installing Windows Server 2019 on one of these servers, you will see how well it performs. The rest of this article reviews the key features of Windows Server 2019 in detail.

Enhanced security measures in Windows Server 2019

Windows Server 2019 integrates Windows Defender Advanced Threat Protection (ATP) for stronger server security. Its Exploit Guard component has four new features:

1) Attack Surface Reduction: A set of rules that blocks suspicious or malicious files, emails with malicious attachments, and unusual behavior such as that of ransomware, preventing them from penetrating the system and server.

2) Network protection: Detects and blocks connections to untrusted or malicious hosts and IP addresses on the web.

3) Controlled folder access: Protects critical data on the server and devices by blocking untrusted programs, such as ransomware, from accessing protected folders.

4) Exploit protection: A set of mitigations that guard against the exploitation of security holes. Note that you can activate this feature manually.
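The idea behind item 3 (only trusted programs may touch protected folders) can be sketched in a few lines of Python. This is an illustration of the allow-list principle only, not how Windows Defender implements it. The folder and program paths are hypothetical.

```python
from pathlib import PureWindowsPath

# Hypothetical configuration: folders to protect and programs trusted to write to them.
PROTECTED_FOLDERS = [PureWindowsPath(r"C:\Data\Finance")]
ALLOWED_APPS = {PureWindowsPath(r"C:\Program Files\Backup\backup.exe")}


def is_protected(target):
    """True if the target path is a protected folder or lies inside one."""
    target = PureWindowsPath(target)
    return any(
        folder == target or folder in target.parents
        for folder in PROTECTED_FOLDERS
    )


def write_allowed(app, target):
    """Allow writes outside protected folders; inside them, only for allow-listed apps."""
    if not is_protected(target):
        return True
    return PureWindowsPath(app) in ALLOWED_APPS


print(write_allowed(r"C:\Temp\unknown.exe", r"C:\Data\Finance\q1.xlsx"))
print(write_allowed(r"C:\Program Files\Backup\backup.exe", r"C:\Data\Finance\q1.xlsx"))
```

`PureWindowsPath` compares paths case-insensitively, which matches how Windows treats file names and lets the sketch run on any operating system.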

Security improvements are not limited to this platform; they also extend to virtualization. In previous versions, troubleshooting protected (shielded) virtual machines was tedious, but Windows Server 2019 makes it easier for users to diagnose virtualization problems. These troubleshooting features do not need to be configured manually and become available automatically. Finally, for users who want a mixed operating system environment, Windows Server 2019 supports running Linux systems such as Ubuntu and Red Hat Enterprise Linux inside these virtual machines.

Improved performance and scalability in Windows Server 2019

Another benefit of Windows Server 2019 Standard is scalability: it can grow with your business as your server needs grow. The platform also offers strong performance, helping your applications and systems run fast and smoothly.

One of the features of Windows Server 2019 that improves performance and scalability is support for hybrid environments. Windows Server 2019 is designed to run both on-premises and in the cloud, allowing enterprises to make the most of available resources and adapt to changing business needs.

Next is storage optimization. With Storage Spaces Direct (S2D), companies can group the storage devices of several servers into a single pool, which improves storage efficiency and performance. In addition, data deduplication and compression reduce the space required to store data.
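To show why deduplication and compression save space, here is a minimal Python sketch. It splits data into fixed-size chunks, stores each distinct chunk only once, and compresses what it stores. The chunking and storage format of the real Windows Server feature differ. The fixed chunk size and all function names are assumptions made for this illustration.

```python
import hashlib
import zlib

CHUNK_SIZE = 4096  # assumed fixed chunk size, chosen for simplicity


def dedup_store(data, chunk_size=CHUNK_SIZE):
    """Split data into chunks, keeping one compressed copy of each distinct chunk."""
    store = {}   # chunk digest -> compressed chunk bytes
    recipe = []  # ordered digests needed to rebuild the original data
    for offset in range(0, len(data), chunk_size):
        chunk = data[offset:offset + chunk_size]
        digest = hashlib.sha256(chunk).hexdigest()
        if digest not in store:
            store[digest] = zlib.compress(chunk)
        recipe.append(digest)
    return store, recipe


def restore(store, recipe):
    """Rebuild the original bytes from the chunk store and the recipe."""
    return b"".join(zlib.decompress(store[digest]) for digest in recipe)


def stored_bytes(store):
    """Total size of the compressed, deduplicated chunks."""
    return sum(len(chunk) for chunk in store.values())


# Ten identical chunks followed by one different chunk.
data = (b"A" * CHUNK_SIZE) * 10 + b"B" * CHUNK_SIZE
store, recipe = dedup_store(data)
print(len(data), stored_bytes(store), len(store))
assert restore(store, recipe) == data
```

The recipe list is what makes the saving lossless: the data can always be rebuilt exactly, while repeated chunks occupy space only once.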

Windows Server 2019 also introduces network virtualization improvements, such as hardware acceleration and support for container-based virtualization, which improve application performance and network efficiency.

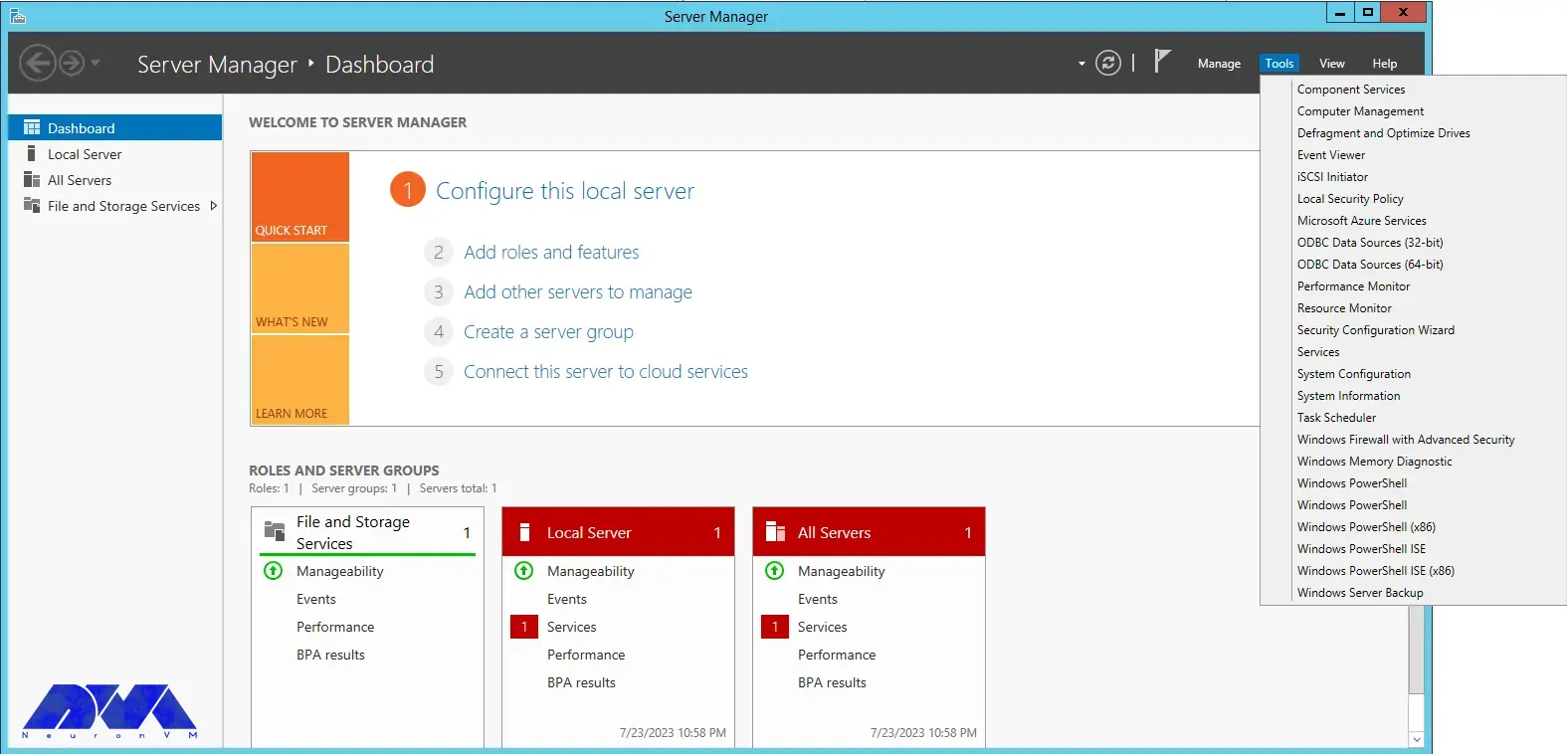

Windows Admin Center: A powerful tool for managing Windows Server 2019

Server management is demanding and exposed to many risks. To reduce those risks and simplify management, you can use Windows Admin Center, a tool with many features. Installed on an internal server, Windows Admin Center can manage standard Windows Server 2019 servers. It can also manage Hyper-V R2 and later servers, Windows Server Core, hyper-converged systems, and Azure.

Windows Admin Center can speed up your work through customizable dashboards. The tool offers a modern monitoring view in which you can change the design of dashboards, place them in different sections, and separate the charts they contain. Each dashboard is a workspace whose information can be saved and shared.

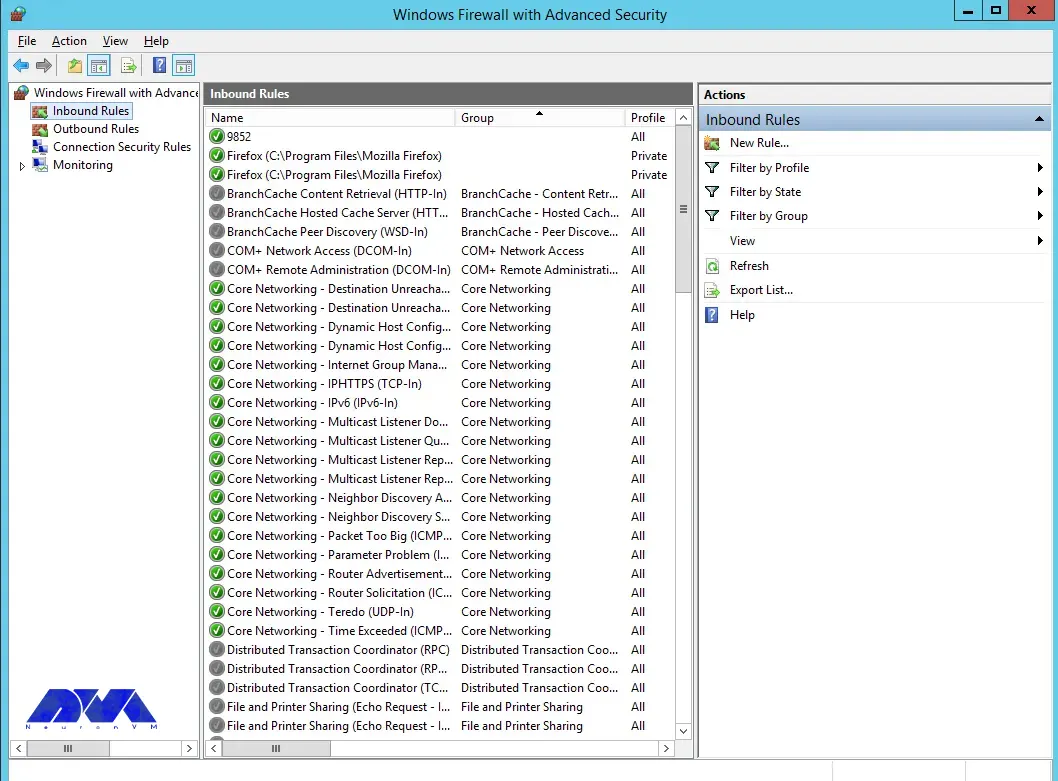

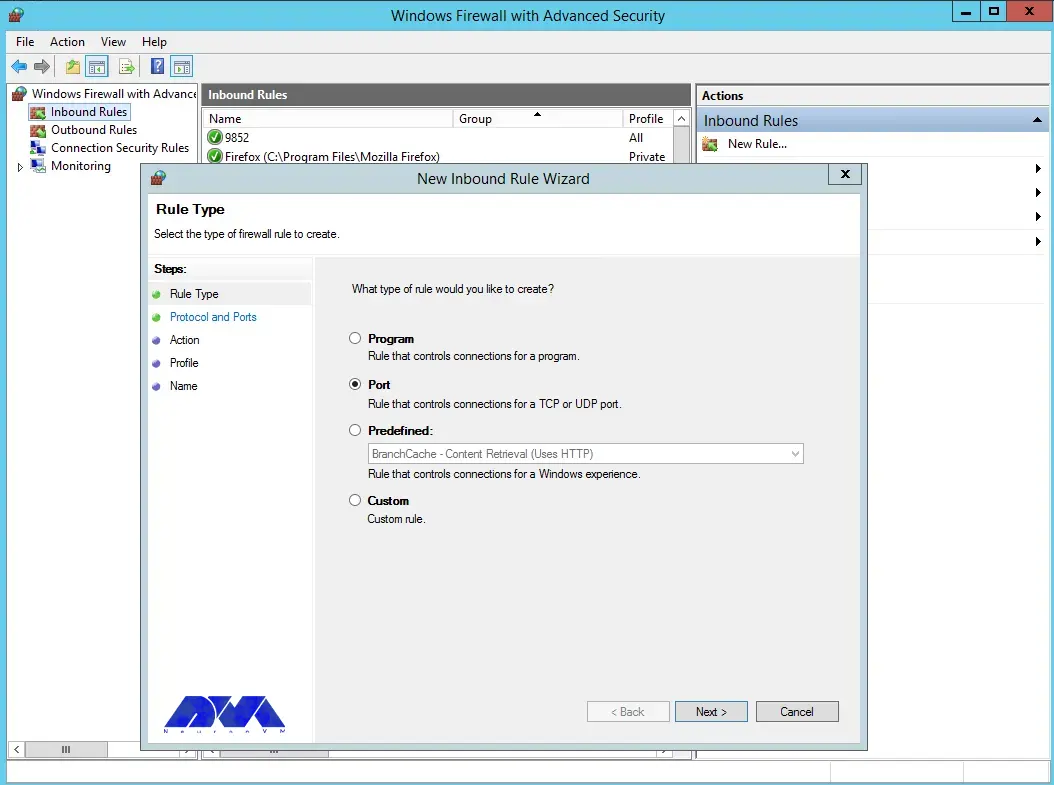

Some tasks always require access to the server console. For these, Windows Admin Center includes a Remote Desktop feature that can be used through a browser. Notably, it gives access to the console of each managed server without opening additional ports in the firewall. All traffic to Windows Admin Center uses the HTTPS protocol and is encrypted in transit.

Working with files from Windows Admin Center is straightforward. You can create new folders, rename or delete files, upload and download files, cut, copy, paste, and even extract archives. Beyond these routine tasks, you can also set up file shares and set their permissions. With Admin Center you can also perform disk management, including formatting and resizing disks and creating and attaching VHD files.

Hybrid cloud capabilities in Windows Server 2019

A hybrid cloud is a combination of one or more public and private clouds that work together as a single collection of virtual resources. The public part is powered by hardware that is owned, managed, and organized by a third party, while the private part is provided to a single customer in a dedicated manner. In both cases, computing and storage resources are provided and allocated automatically through a self-service user interface.

Interoperability is the fundamental basis of a hybrid cloud. Without it, a public cloud and a private cloud can exist independently of each other, but they do not form a hybrid cloud, even if the same company or organization uses both. Hybrid clouds include multiple connection points, and software services integrated into the core allow resources, operating systems, and applications to move across the environment.

Today it is hard to imagine an IT environment without virtualization and hybrid cloud. For this reason, Microsoft has improved the connection between the Azure cloud platform and the Windows Server operating system in Windows Server 2019. This connection is not limited to Windows Admin Center: the Azure Network Adapter also makes it possible to connect a server to the cloud platform. In addition, Windows Server 2019 includes better support for Azure Backup, Azure File Sync, disaster recovery (DR), and other Azure services.

Cloud management tools give you a unified platform for managing hybrid clouds. They free you from managing the hybrid environment manually with separate management and planning tools for each deployment and with additional expert operators. These unified platforms encapsulate the core technologies and centralize management tasks so that operators and users can control the system lifecycle, automation, policy enforcement, and costs when deploying services.

Containerization and virtualization advancements in Windows Server 2019

Windows Server 2019 can run both Windows and Linux containers on the same container host. It also includes built-in support for Kubernetes, along with significant improvements to container networking. Additional container improvements include integrated Windows authentication in containers, improved application compatibility, and smaller base container images. Together, these container improvements can speed up container workflows, make containers more secure and reliable, and improve the efficiency of container networks.

Windows Server containers share the kernel of the host operating system, much as Linux containers do. In other words, although namespace, file system, and network isolation separate containers from each other, the shared kernel means that vulnerabilities can still affect different Windows Server containers running on the same host. For example, if you log in to the host operating system of your container host, you can see the processes running in each container.

The container cannot see the host or other containers and is still isolated from the host in various ways, but the fact that the host can see the processes inside the container shows that some resources are shared with the host. Windows Server containers are therefore best suited to situations where the host and the container are in the same secure domain and trust each other, for example on servers that the company owns and manages itself. If you trust both your host server and your containers, Windows Server containers provide the most efficient way to use hardware resources.

Upgrading to Windows Server 2019: Considerations and best practices

To upgrade to Windows Server 2019, follow these steps:

1) Log in as an administrator on the server you want to upgrade.

2) Insert the Windows Server 2019 DVD or mount the installation ISO.

3) Go to the root of the installation media and double-click setup.exe. The Windows Server 2019 setup window appears.

4) Follow the steps in the wizard.

Tip: If you are upgrading from a DVD, you may be prompted to boot from the DVD. You can let the prompt time out and the upgrade will continue.

While the upgrade finishes, a screen indicates that the settings are being finalized. When the upgrade is complete, you are presented with the Windows Server 2019 login screen.
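Before starting the upgrade, it can help to check the prerequisites mentioned above. The Python sketch below is a hypothetical checklist helper, not a Microsoft tool. It assumes that a direct in-place upgrade to Windows Server 2019 is possible from Windows Server 2012 R2 and Windows Server 2016, as Microsoft's upgrade documentation describes. Verify this against the current documentation for your edition. The function and parameter names are invented for this example.

```python
# Assumption: source versions from which a direct in-place upgrade to 2019 is possible.
DIRECT_UPGRADE_SOURCES = {"2012 R2", "2016"}


def pre_upgrade_problems(current_version, is_admin, media_files):
    """Return a list of problems to fix before running setup.exe.

    current_version: e.g. "2016" (hypothetical naming convention)
    is_admin:        True if logged in as an administrator
    media_files:     file names found at the root of the installation media
    """
    problems = []
    if current_version not in DIRECT_UPGRADE_SOURCES:
        problems.append(
            f"no direct in-place upgrade from Windows Server {current_version}"
        )
    if not is_admin:
        problems.append("not logged in as an administrator")
    if "setup.exe" not in {name.lower() for name in media_files}:
        problems.append("setup.exe not found at the root of the installation media")
    return problems


print(pre_upgrade_problems("2016", True, ["setup.exe", "sources"]))
print(pre_upgrade_problems("2008 R2", False, []))
```

An empty list means the basic prerequisites from the steps above are met. It does not replace a backup or a compatibility check of your applications.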

Case studies and success stories of organizations using Windows Server 2019

Windows Server 2019 is a version of Windows built for servers. It is designed to meet business needs such as access control, data management, cloud integration, and virtualization. It comes in three editions: Datacenter, Essentials, and Standard, each suitable for different use cases and environments. Here are two examples of how Windows Server 2019 has been received and used to improve performance, security, and efficiency.

1) ZDNet reviewed Windows Server 2019 and praised its features, particularly its improvements in security, hyper-converged infrastructure, and hybrid cloud. They also noted that Windows Server 2019 provides a solid foundation for future data center advancements, including edge locations.

2) Microsoft published a case study of Coles Group, an Australian retailer that migrated to Windows Server 2019 to modernize its IT infrastructure and reduce costs. Coles Group reported that Windows Server 2019 helped them achieve faster deployment, better scalability, increased security, and easier management.

Conclusion: The future of Windows Server 2019 and its impact on businesses

Windows Server 2019 is another Microsoft operating system designed for servers. It can be used by the world's large data centers as well as by small companies. Windows Server 2019 provides new and advanced features in virtualization, networking, storage, user experience, cloud computing, automation, and more. In simple words, Windows Server 2019 helps you handle your company’s IT affairs more easily and at a new level while reducing costs. For many businesses, including online businesses, adopting Windows Server 2019 can have a clearly positive impact.